I am a researcher at Google DeepMind. I completed my PhD at the Robotics Institute, CMU, where I was advised by Dr. Oliver Kroemer.

Selected Publications

- MResT: Multi-Resolution Sensing for Real-Time Control with Vision-Language Models

  Saumya Saxena*, Mohit Sharma*, Oliver Kroemer

- RoboAgent: Towards Sample Efficient Robot Manipulation with Semantic Augmentations and Action Chunking

  Homanga Bharadhwaj*, Jay Vakil*, Mohit Sharma*, Abhinav Gupta, Shubham Tulsiani, Vikash Kumar

- RoboAdapters: Lossless Adaptation of Pretrained Vision Models for Robotic Manipulation

  Mohit Sharma, Claudio Fantacci, Yuxiang Zhou, Skanda Koppula, Nicolas Heess, Jon Scholz, Yusuf Aytar

- Search-Based Task Planning with Learned Skill Effect Models for Lifelong Robotic Manipulation

  Jacky Liang*, Mohit Sharma*, Alex LaGrassa, Shivam Vats, Saumya Saxena, Oliver Kroemer

- Inverse Reinforcement Learning with Explicit Policy Estimates

  Navyata Sanghvi*, Shinnosuke Usami*, Mohit Sharma*, Joachim Groeger, Kris Kitani

-

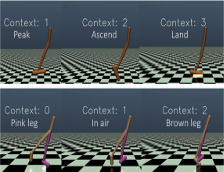

Learning to Compose Hierarchical Object-Centric Controllers for Robotic Manipulation

Mohit Sharma*, Jacky Liang*, Alan Zhao, Alex LaGrassa, Oliver Kroemer

(Long Talk)

- Relational Learning for Skill Preconditions

  Mohit Sharma, Oliver Kroemer

- Directed-Info GAIL: Learning Hierarchical Policies from Unsegmented Demonstrations using Directed Information

  Arjun Sharma*, Mohit Sharma*, Nick Rhinehart, Kris M. Kitani

Preprints/Reports

- A Modular Robotic Arm Control Stack for Research: Franka-Interface and FrankaPy

  Kevin Zhang*, Mohit Sharma*, Jacky Liang*, Oliver Kroemer

  (Used by multiple labs at CMU and outside)

Other stuff

- Outside of research, I enjoy reading and running.